AFF: Your Betting Broker Specialist

Betting on sports has become a popular pastime for many people around the world. And with the advent of online betting, it has become even easier to place a bet on your favorite team or player. But what exactly is a sports betting broker?

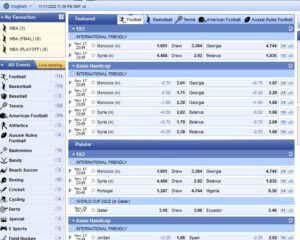

A sports betting broker is a middleman who helps you place your bet with a bookmaker. Brokers typically work with multiple Asian bookmakers and can help you find the best odds for the game you want to bet on. They can also help you with other aspects of sports betting, such as choosing a betting strategy or managing your bankroll.

If you’re interested in placing a bet on a particular game, an Asian betting broker can be a valuable resource. They can help you find the best odds and make sure you’re getting the most out of your sports betting experience.

Why should you use a betting broker?

There are many reasons why you might want to use a bet broker. A bet broker can save you time and money by finding the best odds for you. They can also offer you advice on what to bet on and how to manage your money. Bet brokers are experts in the field of gambling, so they can help you make the most of your money.

If you are thinking about using a bet broker, then there are a few things you should keep in mind. First, bet brokers typically charge a commission for their services. This commission is usually a percentage of the amount you bet. Second, you should only use a bet broker if you are serious about gambling. Bet brokers can offer you a lot of advice, but they can also take your money if you’re not careful.

- A bet broker can help you place bets on a variety of different sporting events.

- A sport betting broker can help you manage your betting bankroll.

- A sport broker can give you advice on how to best use your money.

- A bet agent can provide you with valuable insights into the world of betting.

- A bet brokerage can help you connect with other bettors and form a betting syndicate.

Advantages:

- Better Odds: Bet brokers often have access to better odds than a single traditional bookmaker. This is because they compare prices across several bookmakers and are not limited by the same restrictions as traditional bookmakers.

- Higher Limits: You can place much larger stakes than most traditional bookmakers accept.

- Wider Range of Events: As mentioned above, bet brokers cover a wide range of events. This means that you can place bets on a wide variety of sports and events.

- Reduced Risk: Betting with a broker can reduce the risk of losing money. This is because you can spread your bets across a number of different events and multiple bookmakers, which reduces the chance of losing your entire bankroll on one bet.

- Skype Betting: for high-stakes punters, brokers accept bets placed directly over Skype or Telegram.

Disadvantages:

- Bet brokers take a small fee.

- You may not get your winnings as quickly as you would if you placed the bet yourself.

- Bet brokers may not be available in all countries.

How does a sport broker work?

A betting broker is a middleman that connects bettors with bookmakers. Betting brokers allow bettors to place bets with multiple bookmakers without having to create separate accounts with each one. This can be beneficial for a number of reasons. For starters, it can be difficult to open an account with a bookmaker if you live in a country where online gambling is restricted. Betting brokers can help you bypass these restrictions by placing your bet for you. Betting brokers can also help you get better odds on your bets. By shopping around with multiple bookmakers, a betting broker can find the best odds for your bet and help you get the most bang for your buck.
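The odds-shopping step described above comes down to comparing the price each bookmaker offers for the same outcome and keeping the highest one. Here is a minimal Python sketch of that comparison. It assumes prices are quoted as decimal odds (Asian bookmakers often display other formats, such as Hong Kong or Malay odds, which would need converting first); the bookmaker names, the prices and the helpers `best_odds` and `potential_return` are hypothetical and not part of any broker's platform.

```python
from typing import Dict, Tuple


def best_odds(quotes: Dict[str, float]) -> Tuple[str, float]:
    """Return the bookmaker offering the highest decimal odds for one outcome."""
    if not quotes:
        raise ValueError("no quotes supplied")
    # max() over the dict keys, ranked by each bookmaker's quoted price
    bookmaker = max(quotes, key=quotes.get)
    return bookmaker, quotes[bookmaker]


def potential_return(stake: float, decimal_odds: float) -> float:
    """Total payout (stake included) if the bet wins at the given decimal odds."""
    return stake * decimal_odds


if __name__ == "__main__":
    # Hypothetical prices for the same outcome at three bookmakers.
    quotes = {"Bookmaker A": 1.95, "Bookmaker B": 2.02, "Bookmaker C": 1.98}
    name, odds = best_odds(quotes)
    print(name, odds, potential_return(100, odds))
```

A broker's software runs this kind of comparison in real time across many markets, but the principle is the same: the same stake returns more when it is placed at the highest available price.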

What are the best bet brokerage services?

In my opinion, after years of practice, here is my selection:

1. BetInAsia

BetInAsia is a leading betting broker that offers a wide range of services to its clients. They offer a simple and easy-to-use platform that makes online betting convenient and fun. They also offer a wide range of markets, competitive odds, and a safe and secure environment for all their clients.

Their offer is divided into two parts:

- Dedicated software (based on Mollybet) that finds the best odds in real time across the top Asian bookmakers. This offer is called BLACK.

- Access to the Betfair betting exchange market, with lower fees. This offer is called ORBIT EXCHANGE.

BetInAsia is a very professional partner you can rely on. They have numerous payment options and I have always been able to withdraw. The first withdrawal of the month is free of charge.

More details about the BetInAsia offer here.

2. AsianConnect

AsianConnect is a trusted online betting and Asian sports broker that offers a safe and secure way to place bets on your favourite sports and games. They provide access to a wide range of online betting and gaming sites, and their experienced team is on hand to help you find the best odds and prices. They also offer a range of features and services to help you get the most out of your betting experience, including live streaming, in-play betting, and cash out.

Their offer can be summarised as:

- Access to major Asian bookmakers in one place (including Pinnacle), plus their dedicated ASIANODDS tool for real-time betting at better odds.

- Access to the ORBITX and PIWI betting exchanges.

AsianConnect is the oldest broker still in business, and this operator can be trusted. I have always received my payments, but the withdrawal options are more limited than with other brokers.

More details about the AsianConnect offer here.

3. SportMarket

SportMarket offers competitive odds on a wide range of sports betting markets, so you can get good value for your bets. It also offers a wide range of payment and withdrawal methods, making it easy for you to collect your winnings.

SportMarket offers almost the same services as the previous brokers, with a dedicated Sportmarket PRO betting platform based on Mollybet. Payment methods are numerous, with no deposit fee and one free withdrawal per month. However, this offer is aimed more at professional bettors: the minimum deposit is 100€, and commissions on betting exchanges are a bit higher than elsewhere. In return, they grant access to Pinnacle, BetDAQ, SBOBET, Betfair, BetISN, Matchbook, SingBet, Smarkets…

More details about the SportMarket offer here.

Is using a bet agent expensive?

Bet brokers are people who earn a commission by organising bets between punters (people who place bets) and bookies (companies that take bets).

The way it works is that the bet broker will find someone who wants to place a bet on a certain outcome, and then find a bookie who is willing to take that bet. The bet broker will then take a cut of the winnings from the bet as their commission.
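This article describes the broker's fee in two ways: as a percentage of the amount you bet, and as a cut of the winnings. The minimal Python sketch below compares your net result under each model. It assumes decimal odds and a hypothetical 2% commission rate; real rates and fee structures vary by broker, and the function names are illustrative only.

```python
def _check_rate(rate: float) -> None:
    """Reject commission rates outside the range [0, 1)."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("commission rate must be between 0 and 1")


def profit_commission_on_winnings(stake: float, decimal_odds: float,
                                  rate: float, won: bool) -> float:
    """Net profit when the broker keeps a share of the winnings only."""
    _check_rate(rate)
    if not won:
        return -stake  # nothing was won, so there is nothing to take a cut from
    winnings = stake * (decimal_odds - 1.0)
    return winnings * (1.0 - rate)


def profit_commission_on_stake(stake: float, decimal_odds: float,
                               rate: float, won: bool) -> float:
    """Net profit when the broker charges a percentage of the amount staked."""
    _check_rate(rate)
    fee = stake * rate  # charged whether the bet wins or loses
    if not won:
        return -stake - fee
    return stake * (decimal_odds - 1.0) - fee


if __name__ == "__main__":
    # Hypothetical example: 100 staked at decimal odds of 3.0, 2% commission.
    for won in (True, False):
        print(
            "won" if won else "lost",
            profit_commission_on_winnings(100, 3.0, 0.02, won),
            profit_commission_on_stake(100, 3.0, 0.02, won),
        )
```

With a 100€ stake at odds of 3.0 and a 2% rate, a winning bet nets 196€ under the winnings model and 198€ under the stake model, while a losing bet costs 100€ and 102€ respectively. Which model costs you more depends on the odds you play and how often you win.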

Is using a bet broker legal?

Such intermediaries are not considered sports betting operators, so they are not subject to local sports betting laws and do not need any special approval. They do, however, all hold a Curaçao betting licence.

On the other hand, since you are not placing the bet directly (the broker is), you are not considered a bettor, and so you are not subject to local sports betting regulation.